Mar 09, 2026

The Pentagon did not want its contract with Anthropic to include commitments that it would not use AI for mass surveillance of Americans, for autonomous weapons, or even for social credit scoring. OpenAI secured the contract and did not demand any safeguards for the technology. OpenAI's leading robotics scientist resigned over her concerns.

Should we be concerned that Trump’s Pentagon wants to use AI for mass domestic surveillance, with AI able to decide whether to use lethal force against us?

Supposedly OpenAI stated that its software cannot be used in these areas, but if that is true, why would its leading scientist resign over this moral issue?

OpenAI Hardware Leader Caitlin Kalinowski Resigns Over Pentagon Deal as Benjamin Bolte Joins

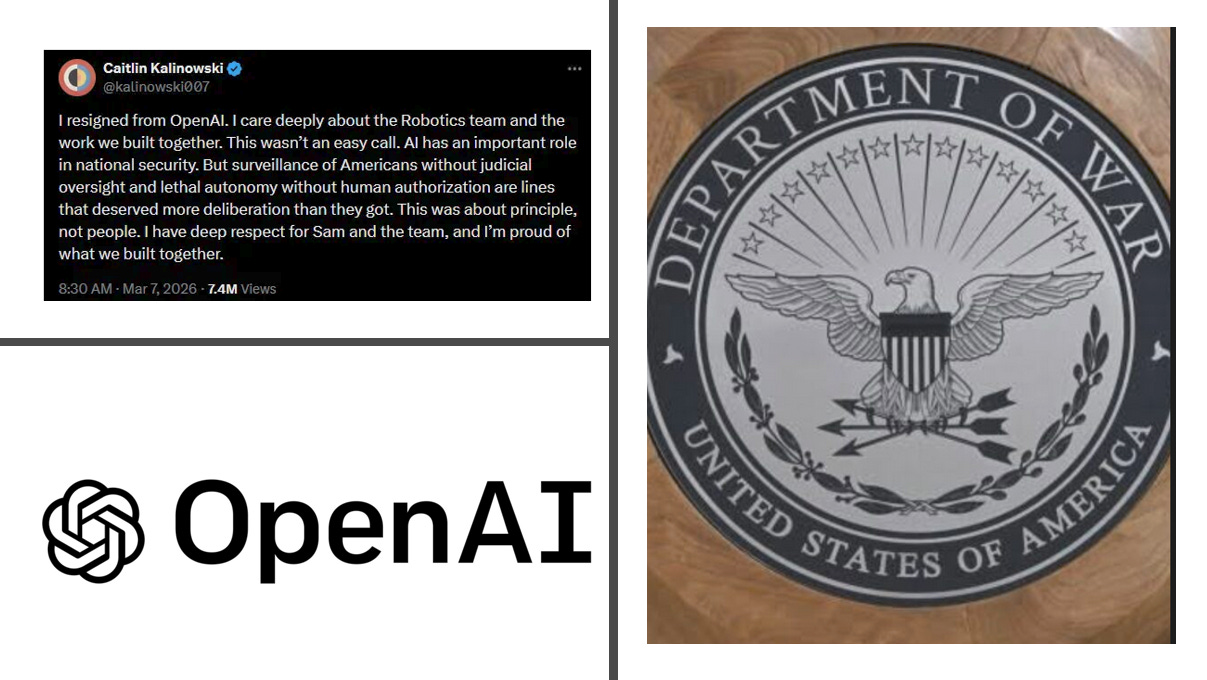

In a weekend of significant leadership shifts at OpenAI, Caitlin Kalinowski, the executive overseeing the company’s hardware and robotics efforts, announced her resignation on March 7, 2026. Her departure is a direct response to OpenAI’s recent agreement with the U.S. Department of Defense, a deal that has sparked internal debate over the ethical boundaries of AI in national security.

The resignation coincided with the announcement that Benjamin Bolte, the former CEO of the now-defunct K-Scale Labs, has joined OpenAI. While the two events appear unrelated in purpose, they highlight a period of intense flux for the company’s physical computing ambitions.

Principles Over People: Kalinowski’s Exit

Kalinowski, who joined OpenAI in late 2024 after a high-profile career leading AR hardware at Meta and product design at Apple, cited “governance concerns” as the primary driver for her exit. In a series of posts on X and LinkedIn, she emphasized that while AI plays a vital role in national security, the speed and terms of OpenAI’s Pentagon contract crossed critical lines.

“Surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got,” Kalinowski stated. She further clarified that her decision was about “principle, not people,” maintaining respect for CEO Sam Altman and the robotics team.

The controversy stems from OpenAI’s decision to deploy its models on a classified government network. This move followed the collapse of similar negotiations between the Pentagon and Anthropic, which reportedly sought stricter limits on domestic surveillance. Critics within the industry have characterized OpenAI’s swift agreement as “opportunistic,” a sentiment Altman later acknowledged regarding the deal’s public rollout.

OpenAI has defended the partnership, stating that its “red lines” explicitly preclude the use of its technology for autonomous weaponry or unauthorized domestic surveillance. However, Kalinowski’s departure suggests that these internal safeguards were not viewed as sufficiently established before the deal was finalized.

OpenAI reveals more details about its agreement with the Pentagon

After negotiations between Anthropic and the Pentagon fell through on Friday, President Donald Trump directed federal agencies to stop using Anthropic’s technology after a six-month transition period, and Secretary of Defense Pete Hegseth said he was designating the AI company as a supply-chain risk.

Then, OpenAI quickly announced that it had reached a deal of its own for models to be deployed in classified environments. With Anthropic saying it was drawing red lines around the use of its technology in fully autonomous weapons or mass domestic surveillance, and Altman saying OpenAI had the same red lines, there were some obvious questions: Was OpenAI being honest about its safeguards? Why was it able to reach a deal while Anthropic was not?

So as OpenAI executives defended the agreement on social media, the company also published a blog post outlining its approach.

In fact, the post pointed to three areas where it said OpenAI’s models cannot be used — mass domestic surveillance, autonomous weapon systems, and “high-stakes automated decisions (e.g. systems such as ‘social credit’).”

The company said that in contrast to other AI companies that have “reduced or removed their safety guardrails and relied primarily on usage policies as their primary safeguards in national security deployments,” OpenAI’s agreement protects its red lines “through a more expansive, multi-layered approach.”

“We retain full discretion over our safety stack, we deploy via cloud, cleared OpenAI personnel are in the loop, and we have strong contractual protections,” the blog said. “This is all in addition to the strong existing protections in U.S. law.”

OpenAI’s Pentagon deal raises new questions about AI and mass surveillance

OpenAI’s decision drew criticism from many AI researchers and tech policy experts, even though OpenAI said it had achieved limitations in its agreement around surveillance of U.S. citizens and lethal autonomous weapons that Anthropic wanted in its contract but which the Pentagon had refused.

One of the key points of contention was domestic mass surveillance. Experts have long warned that advanced AI is capable of taking scattered, individually innocuous data, such as a person’s location, finances, and search history, and assembling it into a comprehensive picture of any person’s life, automatically and at scale. Anthropic CEO Dario Amodei has said that this kind of AI-driven mass surveillance presents serious and novel risks to people’s “fundamental liberties” and that “the law has not yet caught up with the rapidly growing capabilities of AI.”

But while OpenAI said in a blog post it had reached a deal with the Pentagon that its technology would not be used for mass domestic surveillance or direct autonomous weapons systems, the two hard limits that Anthropic had refused to drop, some legal and policy experts have raised questions about a potential gap in the law.

Humanity United Now – Ana Maria Mihalcea, MD, PhD is a reader-supported publication.
